Why do we do Continuous Integration?

Continuous Integration is now very much a central process in most agile development efforts, but it hasn’t been around all that long. It may be widely regarded as a “development best practice”, but some teams are still waiting to adopt C.I. Seriously, they are.

And it’s not just agile teams that can benefit from C.I. The principles behind good C.I. can apply to any development effort.

This article aims to explain where C.I. came from, why it has become so popular, and why you should adopt it on your development project, whether you’re agile or not.

Back in the Day…

Are you sitting comfortably? I want you to close your eyes, relax, and cast your mind back, waaay back, to 2003 or something like that…

You’re in an office somewhere, people are talking about The Matrix way too much, and there’s an alarming amount of corduroy on show… and developers are checking in code to their source control system…

Suddenly a developer swears violently as he checks out the latest code and finds it doesn’t compile. Someone’s check-in has broken the codebase.

He sets about fixing it and checking it back in.

Suddenly another developer swears violently…

Rinse and repeat.

C.I. started out as a way of minimising code integration headaches. The idea was, “if it’s painful, don’t put it off, do it more often”. It’s much better to do small and frequent code integrations rather than big ugly ones once in a while. Soon tools were invented to help us do these integrations more easily, and to check that our integrations weren’t breaking anything.

Tests!

Excavations of fossilized C.I. systems from the early 21st Century suggest that these primitive systems basically just compiled code, and then, as unit tests became more popular, started running the unit tests as well. So every time someone checked in some code, the C.I. system would check that the newly integrated codebase still compiled and passed the unit tests. Simple!
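To make that loop concrete, here is a minimal Python sketch of what such an early C.I. system did on every check-in: run a compile step, then the unit tests, and report the first step that failed. The steps are modelled as plain callables, and the names (`integrate`, `BuildResult`) are hypothetical; a real C.I. server would invoke your actual build tool instead.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# A step is a hypothetical (name, action) pair; the action returns True on success.
Step = Tuple[str, Callable[[], bool]]


@dataclass
class BuildResult:
    commit: str
    passed: bool
    failed_step: Optional[str] = None


def integrate(commit: str, steps: List[Step]) -> BuildResult:
    """Run each step in order for one check-in, stopping at the first failure."""
    for name, run in steps:
        if not run():
            # No point running the unit tests if the code doesn't compile.
            return BuildResult(commit, passed=False, failed_step=name)
    return BuildResult(commit, passed=True)


if __name__ == "__main__":
    steps = [("compile", lambda: True), ("unit tests", lambda: False)]
    print(integrate("abc123", steps))
    # BuildResult(commit='abc123', passed=False, failed_step='unit tests')
```

Stopping at the first failure mirrors the rule above: a check-in only counts as integrated if it both compiles and passes the unit tests.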

C.I. systems then started displaying test results, and we started using them to run huuuuge overnight builds which would actually deploy our builds and run integration tests. The C.I. system was the automation centre: it ran all these tasks on a timer and then provided the feedback – usually an email saying what had passed and what had broken. I think this was an important time in the evolution of C.I., because people started seeing C.I. as more of an information generator and a communicator, rather than just a techie tool that ran some builds on a regular basis.

Information Generator

Management teams started to get information out of C.I., and so it became an “Enterprise Tool”.

Some processes and “best practices” were identified early on:

- Builds should never be left in a broken state.

- You should never check in on a broken build because it makes troubleshooting and fixing even harder.
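Here is a small sketch of why the second rule matters, assuming every check-in triggers its own build. Once the build goes red, every commit that lands on top of it joins the list of suspects, so each extra check-in makes it harder to find the one that actually broke things. The function names and the `is_fix` escape hatch (letting through the commit that repairs the build) are hypothetical.

```python
from typing import List, Tuple

# Build history in check-in order: (commit id, did its build pass?)
History = List[Tuple[str, bool]]


def suspects_since_last_green(history: History) -> List[str]:
    """Commits checked in since the most recent passing build.

    Empty while the build is green; grows with every check-in made
    on top of a broken build.
    """
    suspects: List[str] = []
    for commit, passed in history:
        if passed:
            suspects = []  # a green build clears the slate
        else:
            suspects.append(commit)
    return suspects


def may_check_in(history: History, is_fix: bool = False) -> bool:
    """Allow a check-in only on a green build, unless it is the fix."""
    return is_fix or not suspects_since_last_green(history)


history = [("a1", True), ("b2", False), ("c3", False)]
print(suspects_since_last_green(history))  # ['b2', 'c3']
print(may_check_in(history))               # False
```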

With this new-found management buy-in, C.I. became a central tenet of modern development practices.

People started having fun with C.I., plugging lava lamps, traffic lights and talking rabbits into the system. These were fun, but they did something very important in the evolution of C.I. – they turned it into an information radiator and a focal point of development efforts.

Automate Everything!

Automation was the big selling point for C.I. Tasks that would previously have been manual, error-prone and time-consuming could now be done automatically, or at night while we were in bed. For me it meant I didn’t have to come in to work on the weekends and do the builds! Whole suites of acceptance, integration and performance tests could automatically be executed on any given build, on a convenient schedule thanks to our C.I. system. This aspect, as much as any other, helped in the widespread adoption of C.I. because people could put a cost-saving value on it. C.I. could save companies money. I, on the other hand, lost out on my weekend overtime.

Code Quality

Static analysis and code coverage tools appeared all over the place, and were ideally suited to being plugged into C.I. These days, many code coverage tools are designed primarily to run as part of a C.I. build rather than by hand. These tools provided a wealth of feedback to the developers and to the project team as a whole. Suddenly we were able to use our C.I. system to get a real feel for our project’s quality. The unit test results combined with the static analysis could give us information about the code quality, the integration and functional test results gave us verification of our design and ensured we were making the right stuff, and the nightly performance tests told us that what we were making was good enough for the real world. All of this information got presented to us, automatically, via our new best friend the Continuous Integration system.

Linking C.I. With Stories

When our C.I. system runs our acceptance tests, we’re actually testing to make sure that what we intended to do has in fact been done. I like the saying that our acceptance tests validate that we built the right thing, while our unit and functional tests verify that we built the thing right.

Linking the acceptance tests (ATs) to the stories is very important, because then we can start seeing, via the C.I. system, how many of the stories have been completed and pass their acceptance criteria. At this point, the C.I. system becomes a barometer of how complete our project is.
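As a rough sketch of that barometer, assume each story ID maps to the pass/fail results of the acceptance tests linked to it, and that a story counts as complete only when it has at least one linked test and all of them pass. The data shape and the rule for stories with no tests are assumptions for illustration, not how any particular C.I. tool reports it.

```python
from typing import Dict, List


def story_completion(results: Dict[str, List[bool]]) -> float:
    """Fraction of stories whose linked acceptance tests all pass.

    A story with no linked tests is treated as not done, since nothing
    shows it meets its acceptance criteria yet.
    """
    if not results:
        return 0.0
    done = sum(1 for outcomes in results.values() if outcomes and all(outcomes))
    return done / len(results)


# Hypothetical story IDs and test outcomes.
results = {
    "STORY-1": [True, True],
    "STORY-2": [True, False],
    "STORY-3": [],
    "STORY-4": [True],
}
print(f"{story_completion(results):.0%} of stories done")  # 50% of stories done
```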

So, it’s time for a brief recap of what our C.I. system is providing for us at this point:

1. It helps us identify integration problems at the earliest opportunity.

2. It runs our unit tests automatically, saving us time and verifying our code.

3. It runs static analysis, giving us a feel for the code quality and potential hotspots, so it’s an early warning system!

4. It’s an information radiator – it gives us all this information automatically.

5. It runs our acceptance tests, ensuring we’re building the right thing, and it becomes a barometer of how complete our project is.

And we’re not done yet! We haven’t even started talking about deployments.

Deployments

OK, now we’ve started talking about deployments.

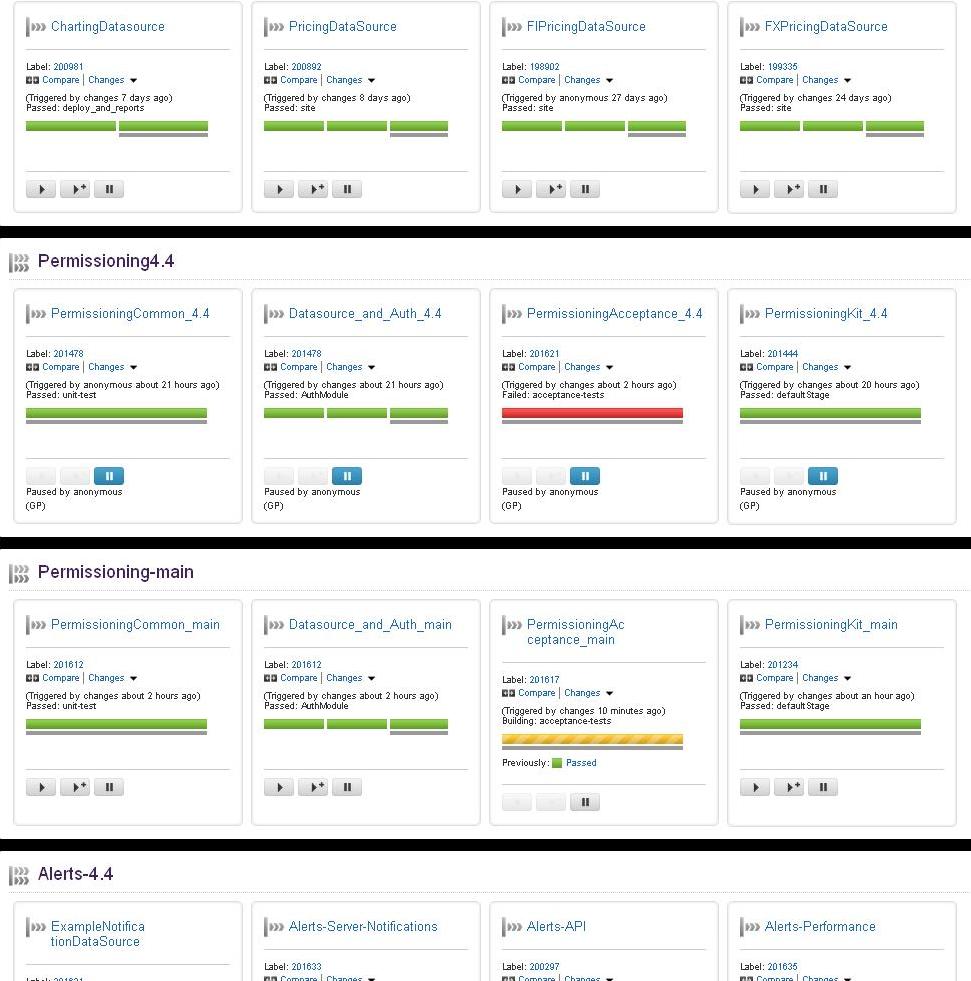

C.I. systems have long been used to deploy builds and execute tests. More recently, with the introduction of advanced C.I. tools such as Jenkins (Hudson), Bamboo and TeamCity, we can use the C.I. tool not only to deploy our builds but to manage deployments to multiple environments, including production. It’s now not uncommon to see a Jenkins build pipeline deploying products to all environments. Driving your production deployments via C.I. is the next logical step in the process, which we’re now calling “Continuous Delivery” (or Continuous Deployment if you’re actually deploying every single build that passes all the test stages).

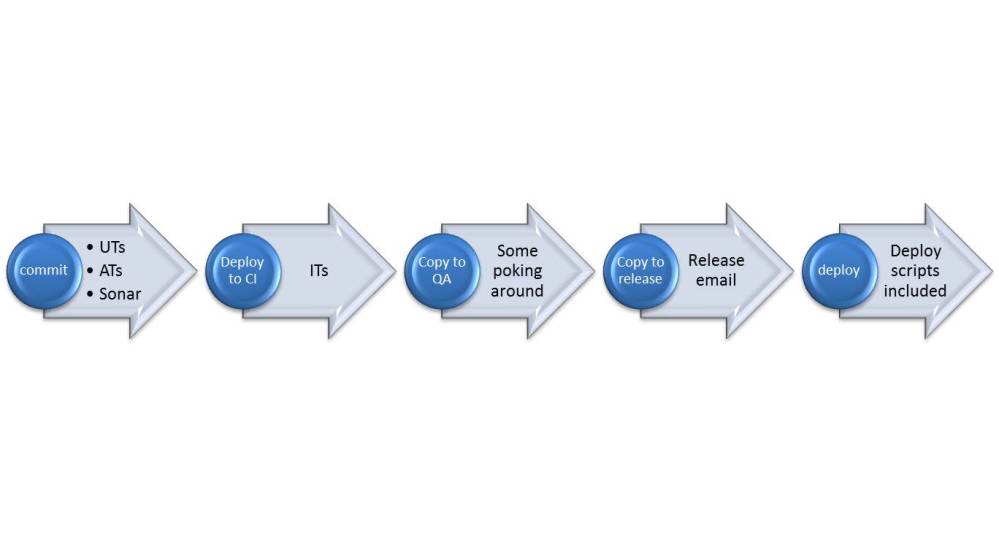

The diagram above shows the stages in a Continuous Delivery system I worked on recently. The build is automatically promoted to the next stage whenever it successfully completes the current stage, right up until the point where it’s available for deployment to production. As you can imagine, this process relies heavily on automation. The tests must be automated, the deployments automated, even the release email and its contents are automated.
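The promotion rule can be sketched in a few lines of Python. The stage names below are hypothetical (the real stages in that system may differ), and each stage is a callable that deploys or tests a given build and reports success. The build moves forward only while stages pass; completing every stage means it is available for production, with the release itself left as a separate decision. That is the Continuous Delivery flavour; Continuous Deployment would release automatically at that point.

```python
from typing import Callable, List, Tuple

# A stage is a hypothetical (name, action) pair; the action returns True on success.
Stage = Tuple[str, Callable[[str], bool]]


def promote(build_id: str, stages: List[Stage]) -> Tuple[List[str], bool]:
    """Promote a build through each stage in order, stopping at the first failure.

    Returns the stages the build completed and whether it completed all of
    them, i.e. whether it is now available for deployment to production.
    """
    completed: List[str] = []
    for name, action in stages:
        if not action(build_id):
            return completed, False
        completed.append(name)
    return completed, True


stages: List[Stage] = [
    ("build and unit tests", lambda b: True),
    ("deploy to test environment", lambda b: True),
    ("acceptance tests", lambda b: True),
    ("performance tests", lambda b: False),
]
done, releasable = promote("build-42", stages)
print(done, releasable)
# ['build and unit tests', 'deploy to test environment', 'acceptance tests'] False
```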

So what exactly is the cost saving with having a C.I. system?

Yeah, that’s a good question, well done me. Not sure I can give you a straight answer to that one though. Obviously one of the biggest factors is the time savings. As I mentioned earlier, back when I was a human C.I. machine I had to work weekends to sort out build issues and get working code ready for Monday morning. Also, C.I. sort of forces you to automate everything else, like the tests and the deployments, as well as the code analysis and all that good stuff. Again we’re talking about massive time savings.

But automating the hell out of everything doesn’t just save us time, it also eliminates human error. Consider the scope for human error in a system where some poor overworked person has to manually build every project, some other poor sap has to manually do all the testing, and then someone else has to manually deploy this project to production and confidently say “Right, now that’s done, I’m sure it’ll work perfectly”. Of course, that never happened, because we were all making mistakes along the line, and they invariably came to light when the code was already live. How much time and money did we waste fixing live issues that we’d introduced just by not having the right processes and systems in place? And by systems, of course, I’m talking about Continuous Integration. I can’t put a value on it, but I can tell you we wasted LOTS of money. We even had bugfix teams dedicated to fixing issues we’d introduced and not caught earlier (due in part to a lack of C.I.).

Conclusion

While for many companies C.I. is old news, there are still plenty of people yet to get on board. It can be hard for people to see how C.I. can really make that much of a difference, so hopefully this post will help to highlight some of the benefits and explain how C.I. has been adopted as one of the most important and central tenets of modern software delivery.

For me, and for many others, Continuous Integration is a MUST.