Last night I was taken to the London premiere of Warren Miller’s latest film, “Flow State”. Free beers, a free goodie bag and an hour and a half of the best snowboarders and skiers in the world doing tricks which in my head I can also do, but which in reality are about 10000000% better than anything I can manage. Good times. Anyway, on my way home I was thinking about “flow” and how it applies to DevOps. It’s a tricky thing to maintain in an Ops capacity. It reminded me of a talk I went to last week where the speakers talked of the importance of “Flow” in their project, and it’s inspired me to write it up:

Thoughtworkers Pat and Aleksander have been working at a top secret location* for a top secret company** on a top secret mission to implement continuous delivery in a corporate Windows world***

* Ok, I actually forgot to ask where it was located

** It’s probably not a secret, but they weren’t telling me

*** It’s obviously not a secret mission seeing as how: a) it was the title of their talk and b) I just said what it was

Pat and Aleksander put their collective PowerPoint skills to good use and made a presentation on the stuff they’d done and learned during their time working on this top secret project, but rather than call their presentation “Stuff We Learned Doing a Project” (that’s what I would have named it) they decided to call it “Experience Report: Continuous Delivery in a Corporate Windows World”, which is probably why nobody ever asks me to come up with names for their presentations.

This talk was at Skills Matter in London, and on my way over there I compiled a list of questions which I was keen to hear their answers to. The questions were as follows:

- What tools did they use for infrastructure deployments? What VM technology did they use?

- How did they do db deployments?

- What development tools did they use? TFS?? And what were their good/bad points?

- Did they use a front-end tool to manage deployments (i.e. did they manage them via a C.I. system)?

- Was the company already bought-in to Continuous Delivery before they started?

- What breed of agile did they follow? Scrum, Kanban, TDD etc.

- What format were the built artifacts? Did they produce .msi installers?

- What C.I. system did they use (and why did they decide on that one)?

- Did they use a repository tool like Nexus/Artifactory?

- If they could do it all again, what would they do differently?

During the evening (mainly in the pub afterwards) Pat and Aleksander answered almost all of the questions above, but before I list them, I’ll give a brief summary of their talk. Disclaimer: I’m probably paraphrasing where I’m quoting them, and most of the content is actually my opinion, sorry about that. And apologies also for the completely unrelated snowboarding pictures.

Cultural Aspects of Continuous Delivery

Although CD is commonly associated with tools and processes, Pat and Aleksander were very keen to push the cultural aspects as well. I couldn’t agree more with this – for me, Continuous Delivery is more than just a development practice, it’s something which fundamentally changes the way we deliver software. We need to have an extremely efficient and reliable automated deployment system, a very high degree of automated testing, small, consumable stories to work from, very reliable environment management, and a simplified release management process which doesn’t create more problems than it solves. Getting these things right is essential to doing Continuous Delivery successfully. As you can imagine, implementing these things can be a significant departure from the traditional software delivery systems (which tend to rely heavily on manual deployments and testing, as well as having quite restrictive project and release management processes). This is where the cultural change comes into effect. Developers, testers, release managers, BAs, PMs and Ops engineers all need to embrace Continuous Delivery and significantly change the traditional software delivery model.

FACT BOMB: Some snowboarders are called Pat. Some are called Aleksander.

Tools

When Aleksander and Pat started out on this project, the dev teams were already using TFS as a build system and for source control. They eventually moved to using TeamCity as a Continuous Integration system, and Git-TFS as the devs’ primary interface to the source control system.

The most important tool for a snowboarder is a snowboard. Here’s the one I’ve got!

- Builds were done using MSBuild

- They used TeamCity to store the build artifacts

- They opted for NUnit for unit testing

- Their build created zip files rather than .msi installers

- They chose SpecFlow for stories/spec/acceptance criteria etc

- They used PowerShell to do deployments

- Sites were hosted on IIS

Practices

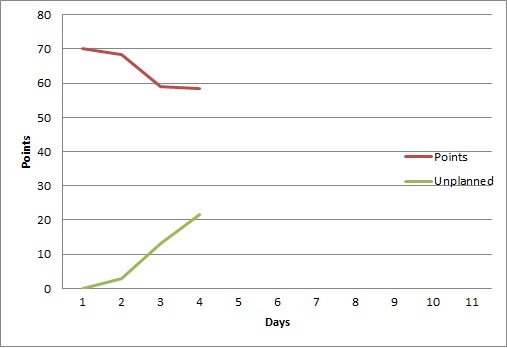

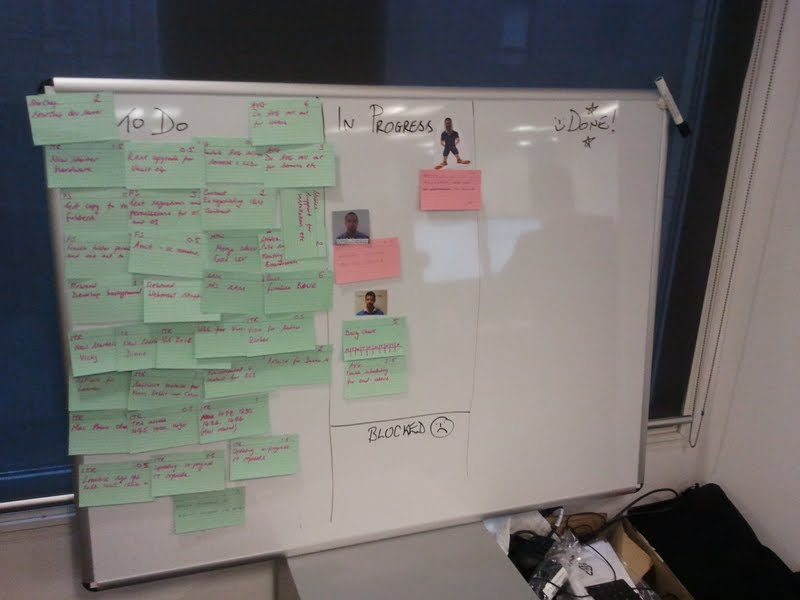

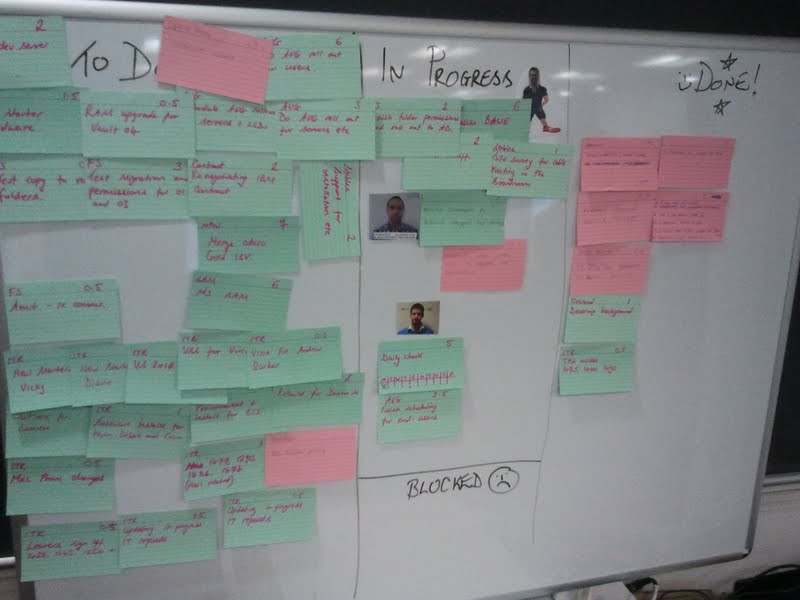

“Work in Progress” and “Flow” were the important metrics in this project by the sounds of things (suffice to say they used Kanban). I neglected to ask them if they actually measured their flow against quality. If I find out I’ll make a note here. Anyway, back to the project… because of the importance of Work in Progress and Flow they chose to use Kanban (as mentioned earlier) – but they still used iterations and weekly showcases. These were more for show than anything else: they continued to work off a backlog and any tasks that were unfinished at the end of one “iteration” just rolled over to the next.
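I don’t know how Pat and Aleksander actually tracked these numbers, but for anyone wondering what “measuring WIP and flow” might look like in practice, here’s a minimal sketch in Python. The story names, dates and function names are all made up for illustration: it counts how many stories are in progress on a given day and works out the average cycle time of the finished ones.

```python
from datetime import date
from statistics import mean

# Hypothetical story records: (name, started, finished).
# finished is None while the story is still in progress.
stories = [
    ("login page", date(2012, 11, 5), date(2012, 11, 8)),
    ("search box", date(2012, 11, 6), date(2012, 11, 12)),
    ("export csv", date(2012, 11, 9), None),
]


def work_in_progress(stories, on_day):
    """Count stories started on or before on_day and not yet finished on that day."""
    return sum(
        1
        for _, started, finished in stories
        if started <= on_day and (finished is None or finished > on_day)
    )


def average_cycle_time_days(stories):
    """Mean number of days from start to finish, over finished stories only."""
    durations = [
        (finished - started).days
        for _, started, finished in stories
        if finished is not None
    ]
    return mean(durations) if durations else None


print(work_in_progress(stories, date(2012, 11, 7)))
print(average_cycle_time_days(stories))
```

Watching those two numbers together is roughly the point of Kanban: if WIP creeps up while cycle time gets longer, stories are getting stuck somewhere.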

Another point that Pat and Aleksander stressed was the importance of having good Business Analysts. They were essential in carving up stories into manageable chunks, avoiding “analysis paralysis”, shielding the devs from “fluctuating functionality” and ensuring stories never got stuck for too long. Some other random notes on their processes/practices:

- Used TDD with pairing

- Testers were embedded in the team

- Maintained a single branch of code

- Regression testing was automated

- They still had to raise a release request with Ops to get stuff deployed!

- The same artifact was deployed to every environment*

- The same deploy script was used on every environment*

* I mention these two points because they’re oh-so important principles of Continuous Delivery.
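To make those last two points a bit more concrete, here’s a rough sketch of the idea. The project itself used PowerShell and I never saw their scripts, so this is Python and entirely my own invention: the environment names, paths and function names are hypothetical. The point is that one zip file and one deploy function serve every environment, and the only thing that varies is a small block of per-environment settings.

```python
import hashlib
import zipfile
from pathlib import Path

# Hypothetical per-environment settings: the only thing allowed to differ.
ENVIRONMENTS = {
    "test": {"site_root": "sites/test"},
    "staging": {"site_root": "sites/staging"},
    "production": {"site_root": "sites/production"},
}


def sha256_of(path):
    """Checksum of the artifact, so we can prove every environment got the same bytes."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def deploy(artifact, env_name, base_dir):
    """Unpack the zip into the target environment's directory and return its checksum."""
    target = Path(base_dir) / ENVIRONMENTS[env_name]["site_root"]
    target.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(artifact) as zf:
        zf.extractall(target)
    return sha256_of(artifact)


def deploy_everywhere(artifact, base_dir):
    """Run the same deploy step against every environment, in order."""
    return {env: deploy(artifact, env, base_dir) for env in ENVIRONMENTS}
```

If you ever find yourself rebuilding the artifact for production, or keeping a “special” deploy script for one environment, then what you tested is no longer what you shipped.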

Obviously I approve of the whole TDD thing, testers embedded in the team, automated regression testing and so on, but I’m not so impressed with the idea of having to raise a release request (manually) with Ops whenever they want to get stuff deployed. It’s not very “devops” 🙂 I’d seek to automate that request/approval process. As for the “single branch of code”, well, it’s nice work if you can get it. I’m sure we’d all like to have a single branch to work from but in my experience it’s rarely possible. And please don’t say “feature toggling” at me.

One area the guys struggled with was performance testing. Firstly, it kept getting de-prioritised, so by the time they got round to doing it, it was a little late in the day, and I assume this meant that any design re-considerations they might have hoped to make could have been ruled out. Secondly, they had trouble actually setting up the load testing in Visual Studio – settings hidden all over the place etc.

Infrastructure

Speaking with Pat, he was clearly very impressed with the wonders of PowerShell scripting! He said they used it very extensively for installing components on top of the OS. I’ve just started using it myself (I’m working with Windows servers again) and I’m very glad it exists! However, Aleksander and Pat did reveal that the procedure for deploying a new test environment wasn’t fully automated, and they had to actually work off a checklist of things to do. Unfortunately, the reality was that every machine in every environment required some degree of manual intervention. I wish I had a bit more detail about this. I’d like to understand what the actual blockers were (before I run into them myself), and I hate to think that Windows can be a real blocker for environment automation.
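I don’t know what was on their checklist, so the following is pure speculation on my part, but one common way to chip away at a manual checklist is to write each item as a step that can check whether it’s already done and apply itself if not. Here’s a minimal Python sketch of that pattern. The step names are hypothetical, and the “machine” is just a dict standing in for real server state.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    name: str
    is_done: Callable[[Dict], bool]  # checks current state, changes nothing
    apply: Callable[[Dict], None]    # makes the change


def run_checklist(steps: List[Step], machine: Dict) -> List[str]:
    """Apply every step that isn't done yet; return the names of steps applied."""
    applied = []
    for step in steps:
        if not step.is_done(machine):
            step.apply(machine)
            applied.append(step.name)
    return applied


# Hypothetical checklist items, for illustration only.
STEPS = [
    Step(
        "install web server",
        lambda m: m.get("web_server", False),
        lambda m: m.update(web_server=True),
    ),
    Step(
        "create app pool",
        lambda m: "app_pool" in m,
        lambda m: m.update(app_pool="default"),
    ),
]

machine = {"web_server": True}
print(run_checklist(STEPS, machine))  # first run applies only what's missing
print(run_checklist(STEPS, machine))  # second run has nothing left to do
```

The nice thing about this shape is that it’s safe to re-run, and any item you can’t automate yet can stay on the list as a step that simply reports it needs a human.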

Anyway, that’s enough of the detail, let’s get to the answers to the questions (I’ve added scores to the answers because I’m silly like that):

- What tools did they use for infrastructure deployments? What VM technology did they use? – PowerShell! They didn’t provision the actual VMs themselves, the Ops team did that, and they weren’t sure what tools the Ops team used. 1

- How did they do db deployments? – Pass 0

- What development tools did they use? TFS?? And what were their good/bad points? – TFS. Source Control and C.I. were bad so they moved to TeamCity and Git-TFS 2

- Did they use a C.I. tool to manage deployments? – Nope 0

- Was the company already bought-in to Continuous Delivery before they started? – They hired ThoughtWorks so I guess they must have been at least partly sold on the idea! Agile adoption was on their roadmap 1

- What breed of agile did they follow? Scrum, Kanban, TDD etc. – TDD with Kanban 2

- What format were the built artifacts? Did they produce .msi installers? – Negatory, they used zip files like any normal person would. 2

- What C.I. system did they use (and why did they decide on that one)? – TeamCity, which is interesting seeing as how ThoughtWorks Studios produce their own C.I. system called “Go”. I’ve used Go before and it’s pretty good conceptually, but it’s also expensive and hard to manage once you’re running over 50 builds and 100 test agents. The UI is buggy too. It does have great features, but the Open Source competitors are catching up fast. 2

- Did they use a repository tool like Nexus/Artifactory? – They used TeamCity’s own internal repo, a bit like with Jenkins where you can store a build artifact. 1

- If they could do it all again, what would they do differently? – They wouldn’t push so hard for the Git-TFS integration, it was probably not worth the considerable effort at the end of the day. 1

TOTAL: 12

What does this total mean? Absolutely nothing at all.

What significance do all the snowboard pictures have in this article? None. Absolutely none whatsoever.