Continuous Delivery in Practice

A couple of months ago I was fortunate enough to be invited to the Thoughtworks Live 2011 event in London. The main topics of this event were agile (as you’d expect from Thoughtworks) and Continuous Delivery. The event ran over two days. The first day included talks from Jez Humble and Martin Fowler, as well as a number of other speakers: Dave West did a session on agile vs the rest, Bjarte Bogsnes presented a very interesting session called “Beyond Budgeting”, and Scott Durchslag gave a guest talk about how agile has worked at Expedia and about his interest in Continuous Delivery. My colleague Steve Morgan was also in attendance on the first day and has produced this excellent post on the contents of day 1. I won’t say much more about the first day because Steve’s post covers it better than I ever could (he cheated by taking notes/paying attention). However, I will just add that it was great to see so many people who genuinely seemed passionate about the evolution of agile, and the coffee breaks were a great opportunity to pick the brains of Messrs Fowler and Humble. I particularly enjoyed the “Beyond Budgeting” talk from Bjarte, and am happy to say he’s written a book about it, which you can buy on the internets!

The only other thing I would say about day 1 was that there was a reasonably good choice of biscuits. Biscuit variety is important and mustn’t be underestimated.

Day 2

Day 2 was somewhat more hands-on than the first day, and also more tools-oriented. It started off with another session from Jez Humble and Martin Fowler, where they discussed Continuous Delivery, covering some of the contents of Jez’s book, and also going into detail about branching (feature branching vs Continuous Integration).

The sessions then moved on to cover some of the products being produced at Thoughtworks Studios. There were talks from Andy Kemp and Suzie Prince about their products Mingle and Twist. Mingle is marketed as an agile project management tool. I’ve not used it myself but it seems to be based around shared workspaces and good requirements tracking. As you’d expect, it seems to integrate well with the other Thoughtworks products, which is probably one of its main advantages. Twist is a functional testing platform, which is very agile-centric in that it allows you to run requirements specs as tests in the “As a user, I want to…” style. Again, it’s the integration with the other tools and products that made it look good to me. I’ve used a lot of agile tools recently, which all seem to be great on their own, but putting them all together so that I can track a requirement through to a test, and a change through to a build, can be a lot of hard work. The final product that they presented was Go, which is their Continuous Integration system, and they even showed us how they used Go to deliver builds of Mingle, Twist and even Go itself (talk about eating your own dogfood).

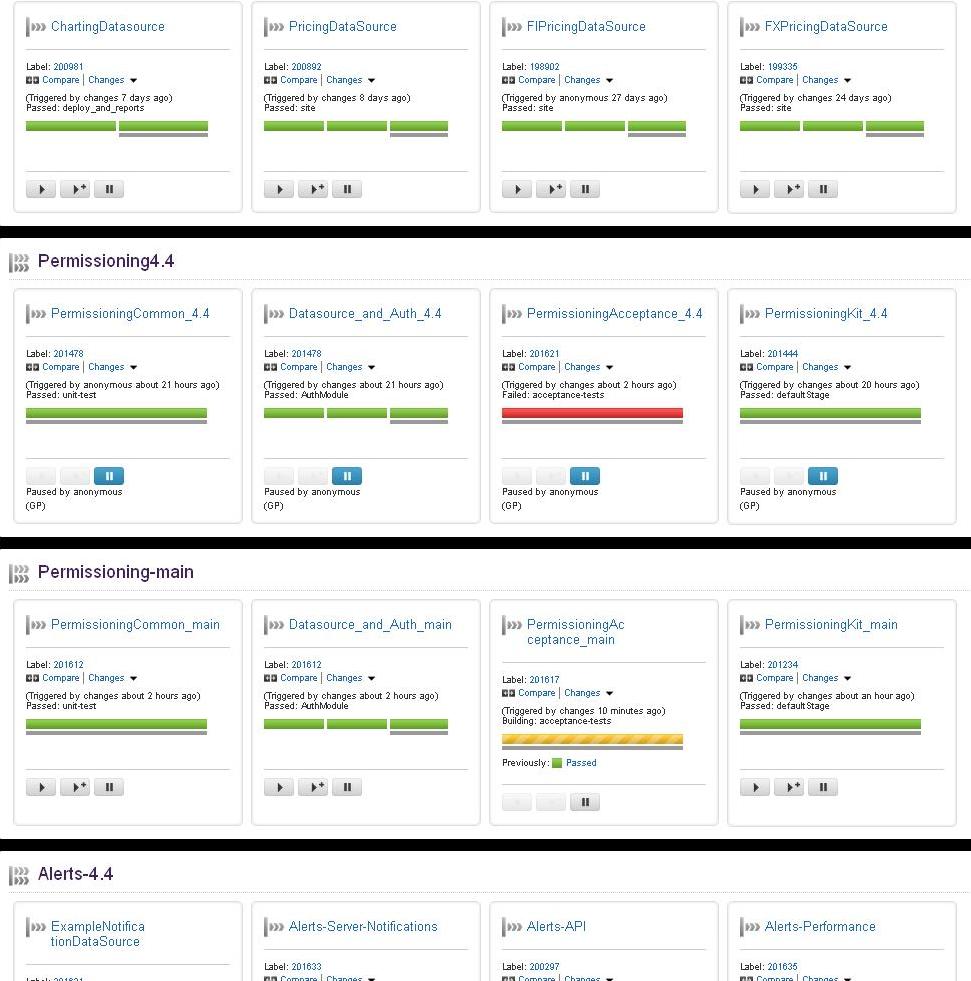

Go CI implementation at Caplin Systems

The centrepiece of day 2 though was of course my very own talk on how we at Caplin Systems are using Go in our Continuous Delivery system 🙂 I was invited to present a session on how Go is helping us achieve our Continuous Integration goals, and how we are using it to implement our own brand of Continuous Delivery. I’ve embedded my presentation above (sorry it’s not a video). I won’t go into too much detail (I’ll save you the lecture), but basically we’re using build pipelines along with a high degree of automated testing to deliver builds which are suitable for deployment. The other feature I have to mention is how Go manages multiple agents. We have about 60 active agents which Go manages. A build can be farmed out to any of these 60 agents, and if a particular resource is needed (let’s say I need to run a test on a particular OS, like CentOS) then Go can be configured to send the build to the right agents. Particular builds can also be configured to be excluded from certain agents, meaning the system is highly configurable.
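To make the agent routing a bit more concrete, here is a minimal Python sketch of that kind of matching. It is not Go's actual code or configuration format: the `Agent` and `Job` classes, the agent names and the resource tags are all made up for the example. It assumes a job may run on any agent that offers every resource the job asks for and that is not on the job's exclusion list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    """A build agent and the resources (tags) it offers. Hypothetical model."""
    name: str
    resources: frozenset = frozenset()


@dataclass(frozen=True)
class Job:
    """A build job, the resources it needs, and agents it must avoid. Hypothetical model."""
    name: str
    required: frozenset = frozenset()
    excluded_agents: frozenset = frozenset()


def eligible_agents(job, agents):
    """Return the names of agents that have every required resource and are not excluded."""
    return [
        agent.name
        for agent in agents
        if job.required <= agent.resources and agent.name not in job.excluded_agents
    ]


# Illustrative data only; these agent names and tags are assumptions.
agents = [
    Agent("agent-01", frozenset({"centos", "java"})),
    Agent("agent-02", frozenset({"windows"})),
    Agent("agent-03", frozenset({"centos"})),
]
job = Job(
    "acceptance-tests",
    required=frozenset({"centos"}),
    excluded_agents=frozenset({"agent-03"}),
)

print(eligible_agents(job, agents))  # ['agent-01']
```

A job with no required resources and no exclusions matches every agent, which corresponds to the "farm it out to any of the 60" case.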

In the afternoon we had an open-space session, during which I spent most of my time with a group discussing database deployments and how to bring database changes under Continuous Integration. It seems there are still a lot of people around who feel that this aspect is largely overlooked with respect to Continuous Integration, and this was reflected in the way people were talking about using manual database compare tools as a way of deploying changes to production. My own feeling is that database changes should be treated more like code changes, with each change scripted and deployed as a code change would be. There needn’t be destructive changes, and a good set of test data should help catch the issues that are often only found in production.
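As a rough illustration of what "scripted like a code change" could look like, here is a small Python sketch using the standard `sqlite3` module. It is only one possible approach, not something presented at the event: the `schema_version` table, the `customer` table and the two change scripts are invented for the example. Each change gets a version number, and the runner applies only the scripts that a given database has not yet seen.

```python
import sqlite3

# Hypothetical, ordered change scripts. In practice these would live in
# version control alongside the application code.
MIGRATIONS = [
    (1, "CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"),
    # An additive change: a new nullable column, nothing dropped.
    (2, "ALTER TABLE customer ADD COLUMN email TEXT"),
]


def applied_versions(conn):
    """Return the set of change versions already recorded in this database."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_version (version INTEGER PRIMARY KEY)"
    )
    return {row[0] for row in conn.execute("SELECT version FROM schema_version")}


def migrate(conn, migrations=MIGRATIONS):
    """Apply outstanding change scripts in version order; return the versions applied."""
    done = applied_versions(conn)
    newly_applied = []
    for version, sql in sorted(migrations):
        if version in done:
            continue  # this database has already had the change
        conn.execute(sql)
        # Record the version so the same script is never run twice.
        conn.execute("INSERT INTO schema_version (version) VALUES (?)", (version,))
        conn.commit()
        newly_applied.append(version)
    return newly_applied


conn = sqlite3.connect(":memory:")
print(migrate(conn))  # [1, 2] on a fresh database
print(migrate(conn))  # [] because nothing is outstanding
```

Because the same runner can be pointed at a test database loaded with realistic data, a build pipeline can exercise every change script before it gets anywhere near production.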