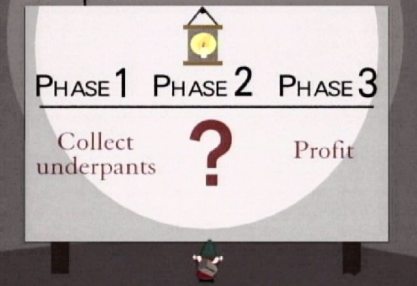

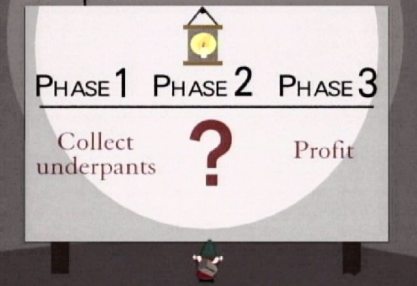

All good plans come in 3 phases:

Although I won’t be collecting any underpants, I’ll be following this basic template (with a couple of tweaks here and there) during an Agile adoption initiative I’m currently working on.

In the South Park episode (from which I have taken the picture above) the boys discover a bunch of underpant-stealing gnomes, who are collecting underpants as part of a grand plan to make profit. The gnomes claim to be business experts but none of them appears to know what phase 2 of the plan is. All they know is that their business model is based on collecting underpants, and so that’s what they’ll do.

Unfortunately, I have been witness to a couple of attempts at adopting agile which weren’t very dissimilar to the underpants gnomes’ business plan. Namely, a business starts “Adopting Agile”, usually driven by the development team, where they start doing stand-ups and using a sprint board (this is phase 1) and somehow they are surprised when this doesn’t suddenly start producing profit. Clearly, “becoming Agile” isn’t as simple as that.

Phase 1 – Collect business reasons (not underpants)

So you’re going Agile. Presumably you’ve determined that this is what you want, and what your customers need. If you haven’t done this yet then stop right there and ask yourself “Do I Need to Go Agile?”. The answer might be “no”, but does needing to go Agile have to be the only reason? Maybe you just want to go agile to see what the fuss is all about, or to make your business more attractive to potential new employees.

So let’s assume we’re going agile, and you have valid business reasons to do so. My first suggestion would be to make those business reasons highly visible. You have to outline the existing issues and how Agile can help to fix them. Mitchell and Webb once did a sketch about a toothbrush company that had to try to think of some gimmick to add to their toothbrushes in order to keep increasing their sales. They came up with the idea of “dirty tongue”. This is where microscopic “tonguanoids” build up, and basically result in social exclusion and a lack of sex. Their solution: to put bristles on the other side of the toothbrush so that people can brush their tongues while they brush their teeth. People will buy these toothbrushes despite the fact that “brushing your tongue makes you retch, everybody knows that”. The point I’m making, very badly, is that it’s a lot easier to sell things if people think that what they’re buying into will fix some very real, tangible issue.

The same goes with Agile. To get the buy-in you need to make your agile adoption a success, you’ll need to identify how “going Agile” is going to make life better for everyone concerned.

If the problem you’ve got is that you never ship software on time, or you constantly fail to deliver what the customer wants, then it’s fairly easy to “sell” agile as the solution. The concept of sprints is a doddle for everyone to understand, and they’ll love the idea that the customer will have regular interactions with the development team, and get to see regular progress in the demos. “Of course!” they’ll say. “It’s so obvious, why didn’t I think of that before?” The business should easily be able to see how short, sharp sprints with an emphasis on “working software” will make it easier to deliver what the customer wants, and manage their expectations of when it’ll be ready.

But what if those aren’t the problems you need to solve?

What if your problem is quality? How do you convince the business that Agile will result in a higher quality product? It’s not quite so easy. Agile itself won’t deliver better quality, but the good practices you’ll have to implement in order to successfully be agile will help to improve your quality. I was thinking about this the other day because it’s exactly the problem I was faced with.

Agile isn’t going to make it easier to reliably test our software. But to be agile, we need to be able to build and deploy our project rapidly so that we can test it right there and then, not tomorrow, not next week, but right now, so that the testers and devs can work in tandem, building features and signing them off and moving on to the next one. We have to facilitate this in order to be agile, so as a byproduct of going agile we might have to invest in creating a new build and deployment system. And it has to be quick so it’ll have to be automated.

So we have an automated build and deployment system, but to be able to reliably test our features we’ll have to make sure the environments are reliable. We can throw people at this problem and dedicate a team to making sure our environments are clean and regularly audited, or we can automate all that as well! Fortunately there are numerous tools and good practices we can follow to do this. Take a look at Chef, Puppet, Vagrant, and VMware as examples of tools for automating deployments of virtual machines, and at the concept of “infrastructure as code” for good practices. (Of course, if your hardware isn’t already virtualised, the first thing to do is see whether it can be, and if it really, honestly can’t, then look at tools like Norton Ghost and PowerShell for ways of automating as much as you can.)
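To give a flavour of what “regularly audited” could look like once it’s automated, here’s a minimal Python sketch. It is not how Chef or Puppet work under the hood; it only illustrates the underlying idea of declaring the state you want as data and comparing it with what’s actually on the box. The package names, version numbers and the `audit_environment` function are all made up for illustration.

```python
# Desired state of a test environment, declared as data.
# All names and versions here are hypothetical examples.
DESIRED = {"openjdk": "1.7.0", "tomcat": "7.0.42", "myapp": "2.3.1"}


def audit_environment(desired, actual):
    """Compare declared state with observed state and list any drift."""
    drift = []
    # Anything declared must be present at exactly the declared version.
    for name, version in sorted(desired.items()):
        found = actual.get(name)
        if found is None:
            drift.append(f"{name}: missing (expected {version})")
        elif found != version:
            drift.append(f"{name}: found {found}, expected {version}")
    # Anything present but not declared is also drift.
    for name in sorted(set(actual) - set(desired)):
        drift.append(f"{name}: not declared, found {actual[name]}")
    return drift


if __name__ == "__main__":
    # In real life this would be collected from the machine itself.
    observed = {"openjdk": "1.7.0", "tomcat": "7.0.39", "curl": "7.29"}
    for line in audit_environment(DESIRED, observed):
        print(line)
```

An empty list means the environment matches what was declared, which is the sort of check you can run before every test deployment instead of asking a team to do it by hand.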


“Agile” and “Improved Quality” might not be the most obvious bed partners, but the journey to becoming agile almost forces you to take steps which will naturally go towards improving your quality.

Hopefully you’ll have enough “sales material” to put forward a great case for agile – you can deliver exactly what the customer wants, to a higher quality, and you can manage their expectations in a way you could never do before. And that’s just scratching the surface of what Agile can do for a business, but for the purposes of keeping this post to a reasonable length, I’ll leave it at those 3 things!

Phase 2 – Pick the most appropriate project, and start doing Scrum

The sales pitch is over and now it’s time to start doing stuff. Make your life a lot easier by picking a project that has as many of the following features as possible:

- Smart developers and testers

- Isn’t suffering from a tonne of technical debt

- Has users who are happy to get involved in early & regular feedback

- Is small, new or yet to begin

If you’re taking on an existing project, a good idea at this point is to benchmark your existing processes. Consider trying to measure the following:

- How long does it take to get a single change from request through to production deployment?

- How much time and money does it cost to fix an issue on production?

- How many bugs do you typically find on your production code every month?

- How often do you deliver features that don’t satisfy the customer?

- How often do you deliver features after the deadline?
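As a rough illustration of the first and last questions above, here’s a small Python sketch that works out a median lead time and the share of late deliveries from a list of change records. The records, field layout and function names are invented for the example; in practice you’d pull the dates out of whatever ticketing or release tool you already have.

```python
from datetime import datetime
from statistics import median

DATE_FORMAT = "%Y-%m-%d"

# Hypothetical change records: (id, requested, deadline, deployed to production)
CHANGES = [
    ("CHG-1", "2013-01-07", "2013-02-11", "2013-02-18"),
    ("CHG-2", "2013-01-14", "2013-02-25", "2013-02-18"),
    ("CHG-3", "2013-02-01", "2013-03-15", "2013-04-01"),
]


def days_between(start, end):
    """Whole days from one ISO-style date string to another."""
    delta = datetime.strptime(end, DATE_FORMAT) - datetime.strptime(start, DATE_FORMAT)
    return delta.days


def median_lead_time(records):
    """Median number of days from request to production deployment."""
    return median(days_between(req, dep) for _, req, _, dep in records)


def late_delivery_rate(records):
    """Fraction of changes deployed after their deadline."""
    late = sum(1 for _, _, due, dep in records if days_between(due, dep) > 0)
    return late / len(records)


if __name__ == "__main__":
    print("Median lead time (days):", median_lead_time(CHANGES))
    print("Late delivery rate:", late_delivery_rate(CHANGES))
```

The median is used rather than the mean so that one monster change doesn’t skew the benchmark, but that’s a judgment call and either would do as a starting point.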

Measuring some of the things above is clearly non-trivial, but if you can find these stats somewhere, they’ll be very useful benchmarks for you in the future. When you can demonstrate that all of these metrics are improved in your new Agile process, god-like status will soon follow.

Guess who just delivered a release on time…

Ohhhh Yeeeeaaaaahhhh!

I recommend doing Scrum because it’s simple and has the most support in terms of people with experience, material (books, courses etc), and tools. It’s a good “framework” to get you started, and once you’ve had success, you can evolve into other methods, or incorporate them into Scrum (such as BDD, TDD etc).

Here’s one of many great books to get you started on your agile journey

At about this point you’ll need to do some brainwashing training. The concept of doing analysis, design, development and testing all at the same time is going to sound absolutely bonkers to some people. Try your best to explain it to them, but don’t waste too much time on this – just crack on and make a start!

Most people will enjoy the experience of working in this “new” way, and the first few sprints will probably benefit from the fact that everyone is performing better simply because they feel more invigorated. Use this opportunity to promote scrum across the organisation.

In this phase, always maintain a focus on “the business” and not just on the technical team. It’s important that the business feels part of this new process or they’ll just see it as some crazy dev thing which doesn’t really affect them, and they won’t try to understand it. Business people might refer to this as “Promoting Synergy”, which I’ve just shoe-horned into this post so that I can add a picture from Lonely Island’s “Like A Boss” video. However, I do like to make a point of always highlighting the extra business value we’re delivering, and make sure the Product Managers (soon to be “Product Owners”) are involved all the way. They represent the traditional link between the customers and what we’re delivering, and so it’s essential that they understand the benefits of agile.

Promote Synergy!

I was recently asked about the impact of “going agile” on a project’s release schedule, and when we would be able to deliver the features we’ve promised to the customers. It’s difficult to explain that we no longer know exactly when we’ll deliver stuff, but at some point, people will have to realise that this is the wrong question. I prefer the idea of a rolling roadmap, which is continually reviewed and updated (as often as you can afford to do it, really). Rolling roadmaps give the business, as well as the customers, a good idea of our intentions, but they are very different to fixed dates on a release schedule, or a traditional yearly roadmap. Of course, everyone needs to understand that the main driver for our deliverables will be the customers, and what the customer wants will usually change over a shorter period than you expect. So for your new “Agile” project, try to work towards implementing a rolling roadmap culture, and move away from long-term fixed delivery dates (if you can).

One final note on Phase 2: Make it fun, and make it different.

Phase 3 – Improve

Agile promotes “fast feedback loops” all over the place: in development we get fast feedback on our code through Continuous Integration, with BDD we get fast feedback to the Product Owners/BAs and of course with our more frequent releases we get faster feedback from the customers. And so it is with our Agile processes as a whole. With short sprints and the clever use of retrospectives we can continually tinker with our fine tuning to see if we can improve our quality and velocity. Look at areas you can try to improve, change something and then see if your change has had a positive impact at the end of the sprint. This is basically the concept behind Deming’s Shewhart Cycle:

Deming actually preferred “Plan, Do, Study, Act”, whereas I myself prefer “Plan, Do, Measure, Act”. I prefer this because it implies basing our actions on quantifiable metrics, rather than on non-quantifiable observations. Anyway, the point is that after agile is applied, you should keep looking at ways to continuously improve. Doing so keeps everyone feeling fresh and invigorated, helps us to learn from our mistakes, and encourages innovation.
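The “Measure” and “Act” steps can be sketched in a few lines of Python. This is only one way of doing it, under assumptions of my own: the `review_change` function, the 5% tolerance and the example numbers are all hypothetical, and you’d pick whichever metric the retrospective decided to target.

```python
def review_change(baseline, measured, lower_is_better=True, tolerance=0.05):
    """Decide what to do with a process change after one sprint.

    baseline: the metric before the change (must be non-zero)
    measured: the same metric at the end of the sprint
    Returns "keep", "revert" or "inconclusive".
    """
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a relative change")
    improvement = (measured - baseline) / baseline
    if lower_is_better:
        # For metrics like bug counts, a drop is an improvement.
        improvement = -improvement
    if improvement > tolerance:
        return "keep"
    if improvement < -tolerance:
        return "revert"
    # Too small a difference to tell apart from sprint-to-sprint noise.
    return "inconclusive"


if __name__ == "__main__":
    # Production bugs per sprint went from 12 to 8 after the change.
    print(review_change(12, 8))
    # Velocity went from 20 to 26 points; here higher is better.
    print(review_change(20, 26, lower_is_better=False))
```

One sprint is a small sample, so an “inconclusive” result is a perfectly good reason to run the experiment for another sprint before deciding.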


So there you go, Agile delivered in 3 well easy steps. It shouldn’t take you much longer than an afternoon. Ok, it might take a bit longer but if you’re looking for a 30,000 foot overview of a simple 3-phase approach, then you could do a lot worse than apply the principles of “Sell the Agile Idea, Pick the Best Project, and Keep Improving”.