8 Principles of Continuous Delivery

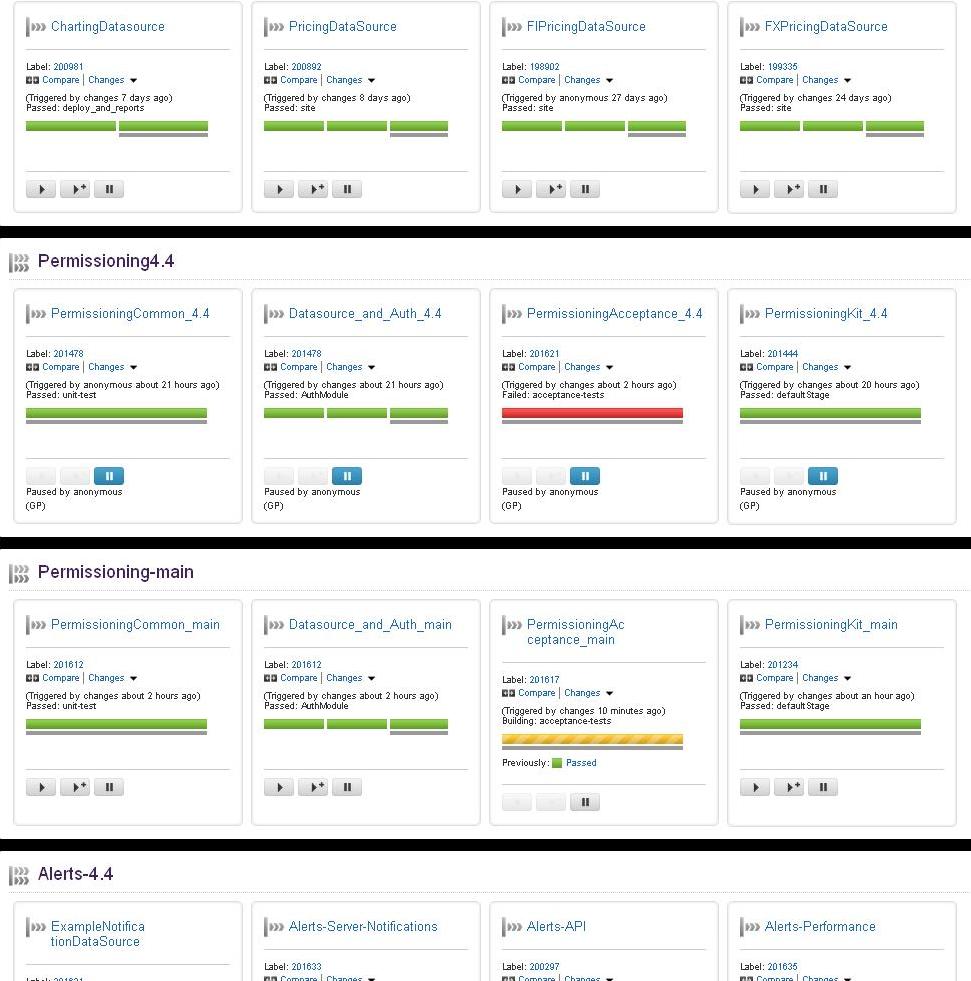

Dave Farley co-authored “Continuous Delivery”, an excellent book in the Martin Fowler signature series, which goes into great detail about the evolution of Continuous Integration, and how to achieve continuous delivery (or continuous deployment) using “build pipelines”.

I went along to hear Dave Farley give a talk on Continuous Delivery and how they’re doing it where he works, at LMAX. It was a really great session and he managed to cover, in quite a short time, a great deal of content from the book. I’ve put together the highlights of what he covered in the talk, mixed with my own take on things.

Here’s what I learned…

Continuous delivery is basically the logical extension of Continuous Integration. It’s a more holistic solution than C.I. though, as it covers a lot more than just the development of software.

For instance, continuous delivery focuses a lot more on requirements than C.I. ever did, and involves many more people in the delivery chain than traditional C.I. as well. It also has a greater customer focus than C.I.

Now, here’s something I didn’t know about continuous delivery…

There are 12 principles behind the Agile Manifesto, the first of which is:

Our highest priority is to satisfy the customer through early and continuous delivery of valuable software

Well who’d have thought it? Continuous delivery was mentioned waaaaay back in the days of the agile manifesto, some 2500 years BC* and yet for most of us it seems like a pretty new idea.

Continuous delivery is based on the use of smart automation. This is all about creating a repeatable and reliable process for delivering software. You have to automate pretty much everything in order to be able to achieve continuous delivery. Manual steps will get in the way or become a bottleneck. This goes for everything from requirements authoring to deploying to production.

The focus is on the finished article – again, this is described as being:

Working software in the hands of the user

Because the focus is on the software in the hands of the user, there’s less tendency, from a developer’s perspective, to simply chuck software over the wall to the QA team, and similarly to the Netops/production team.

Continuous delivery is all about getting that product out there, and getting the feedback from the users. This might mean delivering “unfinished” demo software during your development iterations, and getting your users to give valuable early feedback, or it might mean deploying experimental software to a website cluster and tracking how successful this new site is as compared to the existing system. Either way, it’s all about feedback loops. Essentially you want to have as rapid a feedback loop with your users as possible.

Feedback loops are familiar to everyone who has worked on a Continuous Integration system. In C.I. feedback loops are generally about getting test feedback (unit test, acceptance test, performance test etc) as quickly as possible – “Fail Fast” – as you’ve probably heard.

Continuous Delivery, as described, takes this idea to its logical conclusion, and gets the users involved in the feedback loop. This is a good example of how Continuous Delivery is more holistic than its C.I. predecessor. In Continuous Delivery, the feedback loop provides feedback not only on the quality of your code, but on the quality of your requirements, and the quality of your processes for delivering software.

8 Principles of Continuous Delivery

- The process for releasing/deploying software MUST be repeatable and reliable. This leads onto the 2nd principle…

- Automate everything! A manual deployment can never be described as repeatable and reliable (not if I’m doing it anyway!). You have to invest seriously in automating all the tasks you do repeatedly, and this tends to lead to reliability.

- If something’s difficult or painful, do it more often. On the surface this sounds silly, but basically what this means is that doing something painful, more often, will lead you to improve it, probably automate it, and this will eventually make it painless and easy. Take for example doing a deployment of a database schema. If this is tricky, you tend to not do it very often, you put it off, maybe you’ll do one a month. Really what you should do is improve the process of doing the schema deployments, get good at it, and do it more often, like once a day if needed. If you’re doing something every day, you’re going to be a lot better at it than if you only do it once a month.

- Keep everything in source control – this sounds like a bit of a weird one in this day and age, I mean seriously, who doesn’t keep everything in source control? Apparently quite a few people. Who knew?

- Done means “released”. This implies ownership of a project right up until it’s in the hands of the user, and working properly. There’s none of this “I’ve checked in my code so it’s done as far as I’m concerned”. I have been fortunate enough to work with some software teams who eagerly made sure their code changes were working right up to the point when their changes were in production, and then monitored the live system to make sure their changes were successful. On the other hand I’ve worked with teams who thought their responsibility ended when they checked their code in to the VCS.

- Build quality in! Take the time to invest in your quality metrics. A project with good, targeted quality metrics (we could be talking about unit test coverage, code styling, rules violations, complexity measurements – or preferably, all of the above) will invariably be better than one without, and easier to maintain in the long run.

- Everybody has responsibility for the release process. A program running on a developer’s laptop isn’t going to make any money for the company. Similarly, a project with no plan for deployment will never get released, and again will make no money. Companies make money by getting their products released to customers, therefore this process should be in the interest of everybody. Developers should develop projects with a mind for how to deploy them. Project managers should plan projects with attention to deployment. Testers should test for deployment defects with as much care and attention as they do for code defects (and this should be automated and built into the deployment task itself).

- Improve continuously. Don’t sit back and wait for your system to become out of date or impossible to maintain. Continuous improvement means your system will always be evolving and therefore easier to change when need be.
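To make the schema deployment example from the third principle a bit more concrete, here’s a minimal sketch of a repeatable, versioned schema deployment. It wasn’t part of the talk; it’s just one way this could look. It uses Python’s built-in `sqlite3` module, and the `MIGRATIONS` list, the `schema_version` table and the `migrate` function are all names I’ve made up for illustration. The idea is that running it once a day or ten times a day gives the same result, because each change is recorded and never applied twice.

```python
import sqlite3

# Hypothetical migrations: (version, SQL) pairs, applied in ascending order.
MIGRATIONS = [
    (1, "CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"),
    (2, "ALTER TABLE customer ADD COLUMN email TEXT"),
]


def applied_versions(conn):
    """Return the set of migration versions already recorded in this database."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_version (version INTEGER PRIMARY KEY)"
    )
    return {row[0] for row in conn.execute("SELECT version FROM schema_version")}


def migrate(conn, migrations=MIGRATIONS):
    """Apply every migration not yet recorded; return the versions applied."""
    done = applied_versions(conn)
    newly_applied = []
    for version, sql in sorted(migrations):
        if version in done:
            continue  # already deployed, so re-running is harmless
        # 'with conn' commits if the block succeeds and rolls back the
        # open transaction if a statement raises.
        with conn:
            conn.execute(sql)
            conn.execute(
                "INSERT INTO schema_version (version) VALUES (?)", (version,)
            )
        newly_applied.append(version)
    return newly_applied


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    print(migrate(conn))  # first run applies both migrations
    print(migrate(conn))  # second run finds nothing left to do
```

Because the script itself lives in source control and does the same thing every time, it ticks off a few of the other principles as well. A real system would more likely use an established migration tool, but the principle is the same.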

To go with these principles there are also:

4 Practices of Continuous Delivery

- Build binaries only once. You’d be staggered by the number of times I’ve seen people recompile code between one environment and the next. Binaries should be compiled once and once only. The binary should then be stored someplace which is accessible only to your deployment mechanism, and your deployment mechanism should deploy this same binary to each successive environment…

- Use precisely the same mechanism to deploy to every environment. It sounds obvious, but I’ve genuinely seen cases where deployments to QA were automated, only for the production deployments to be manual. I’ve also seen cases where deployments to QA and production were both automated, but in 2 entirely different languages. This is obviously the work of mad people.

- Smoke test your deployment. Don’t leave it to chance that your deployment was a roaring success; write a smoke test and include that in the deployment process. I also like to include a simple diagnostics test. All it does is check that everything is where it’s meant to be – it compares a file list of what you expect to see in your deployment against what actually ends up on the server. I call it a diagnostics test because it’s a good first port of call when there’s a problem.

- If anything fails, stop the line! Throw it away and start the process again; don’t patch, don’t hack. If a problem arises, no matter where, discard the deployment (i.e. roll back), fix the issue properly, check it in to source control and repeat the deployment process. A lot of people comment that this is impossible, especially if you’ve got a tiny outage window to deploy things to your live system, or if your production changes are done in the middle of the night while nobody else is around to fix the issue properly. I would say that these arguments rather miss the point. Firstly, if you have only a tiny outage window, hacking your live system should be the last thing you want to do, because this will invalidate all your other environments unless you similarly hack all of them as well. Secondly, the next time you do a deployment, you may reintroduce the original issue. Thirdly, if you’re doing your deployments in the middle of the night with nobody around to fix issues, then you’re not paying enough attention to the 7th principle of Continuous Delivery – Everybody has responsibility for the release process. Unless you can’t avoid it, I wouldn’t recommend doing releases when there’s the least amount of support available; it simply goes against common sense.
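The diagnostics test from the smoke-testing practice is simple enough to sketch in a few lines. This is only an illustration under my own assumptions: the function names `diagnose` and `deployment_ok`, and the file names in the example, are hypothetical. It compares the list of files you expect against what is actually under the deployment directory, and reports anything missing or unexpected.

```python
import tempfile
from pathlib import Path


def diagnose(expected_files, deploy_root):
    """Compare expected relative paths with the files actually under deploy_root."""
    root = Path(deploy_root)
    actual = {
        p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file()
    }
    expected = set(expected_files)
    return {
        "missing": sorted(expected - actual),      # expected but not deployed
        "unexpected": sorted(actual - expected),   # deployed but not expected
    }


def deployment_ok(report):
    """A deployment passes only if nothing is missing and nothing is unexpected."""
    return not report["missing"] and not report["unexpected"]


if __name__ == "__main__":
    # Simulate a deployment directory with one missing and one stray file.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "bin").mkdir()
        (root / "bin" / "app").write_text("binary placeholder")
        (root / "stray.tmp").write_text("left over")

        report = diagnose(["bin/app", "conf/app.ini"], root)
        print(report)
        print("deployment ok:", deployment_ok(report))
```

If `deployment_ok` comes back false, that’s the cue to stop the line: the deployment script would exit with a failure and roll back, not carry on and hope for the best.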

* Approximate date.