DevOps Scrum Framework

Imagine this hypothetical conversation I didn’t have with someone last week…

THEM: “Is there a DevOps framework?”

ME: “Noooooo, it doesn’t work like that”

THEM: “Why?”

ME: “Well DevOps is more like a philosophy, or a set of values and principles. The way you apply those principles and values varies from one organisation to the next, so a framework wouldn’t really work, especially if it was quite prescriptive, like Scrum”

THEM: “But I really want one”

ME: “Ok, I’ll tell you what, I’ll hack an existing framework to make it more devopsy, does that work for you?”

THEM: “Take my money”

So, as you can see, in a hypothetical world, there is real demand for a DevOps framework. The trouble with a DevOps framework, as is always the problem with anything to do with DevOps, nobody can actually agree what the hell DevOps means, so any framework is bound to upset a whole bunch of people who simply disagree with my assumption of what DevOps means.

So, with that massive elephant in the room, I’m just going to blindly ignore it and crash on with this experimental little framework I’m calling DevOpScrum.

Look, I know I don’t have a talent for coming up with cool names for frameworks (that’s why I’d never make it in the JavaScript world), but just accept DevOpScrum into your lives for 10 minutes, and try not to worry about how crap the name is.

In my view (which is obviously the correct view) DevOps is a lot more than just automation. It’s not about Infrastructure as Code and Containers and all that stuff. All that stuff is awesome and allows us to do things in better and faster ways than we ever could before, but it’s not the be-all-and-end-all of DevOps. DevOps for me is about the way teams work together to extract greater business value, and produce a better quality solution by collaborating, working as an empowered team, and not blaming others (and also playing with cool tools, obvs). And if DevOps is about “the way teams work together” then why the hell shouldn’t there be a framework?

The best DevOps framework is the one a team builds itself, tailored specifically for that organisation’s demands, and sympathetic to its constraints. Incidentally, that’s one reason why I like Kanban so much, it’s so adaptable that you have the freedom to turn it into whatever you want, whereas scrum is more prescriptive, and if you meddle with it you not only confuse people, you anger the Scrum gods. However, if you don’t have time to come up with your own DevOps framework, and your familiar with Scrum already, then why not just hack the Scrum framework and turn it into a more DevOps-friendly solution?

Which brings us nicely to DevOpScrum, a DevOps Framework with all the home comforts of Scrum, but with a different name so as not to offend Scrum purists.

The idea with DevOpScrum is to basically extend an existing framework and insert some good practices that encourage a more operational perspective, and encourage greater collaboration between Dev and Ops.

How does it work?

Start by taking your common-or-garden Scrum framework, and then add the following:

Infrastructure/Ops personnel

Operability features on the backlog

A definition of Done that includes “deployable, monitored, scalable” and so on (i.e doesn’t just focus on “has the product feature been coded?”)

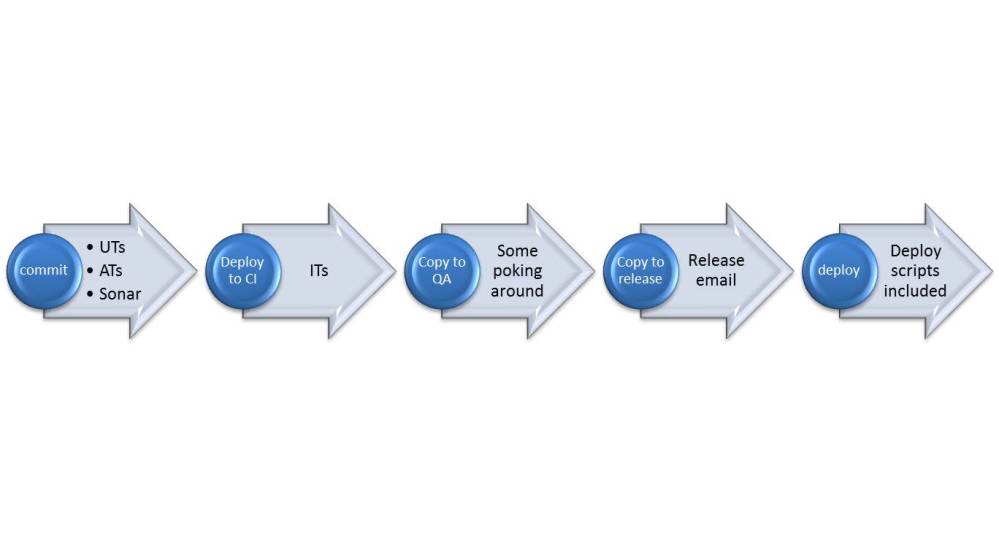

Continuous Delivery as a mandatory practice!

And there you have it. A scrum-based DevOps Framework.

Let’s look into some of the details…

We’ll start with The Team…

A product owner (who appreciates operability – what we once called “Non-Functional Requirements in the olden days. That term is so not cool anymore. It’s less cool than bumbags).

Bumbags – uncool, but still cooler than the term “non-functional requirements”

Devs, Testers, BAs, DBAs and all the usual suspects.

Infrastructure/Ops people. Some call them DevOps people these days. These are people who know infrastructure, networking, the cloud, systems administration, deployments, scalability, monitoring and alerting – that sort of stuff. You know, the stuff Scrum forgot about.

Roles & Responsibilities

Pretty similar to scrum, to be fair. The Product Owner has ultimate responsibility for deciding priorities and is the person you need to lobby if you think your concerns need to be prioritised higher. For this reason, the Product Owner needs to understand the importance of Operability (i.e the ability to deploy, scale, monitor, maintain and so on), which is why I recommend Product Owners in a DevOps environment get some good DevOps training (by pure coincidence we run a course called “The DevOps Product Owner” which does exactly what I just described! Can you believe that?!).

There’s no scrum master in this framework, because it isn’t scrum. There’s a DevOpScrum coach instead, who basically does the scrum master coach and is responsible for evangelising and improving the application of the DevOps values and principles.

DevOps Engineers – One key difference in this framework is that the team must contain the relevant infrastructure and Ops skills to get stuff done without relying on an external team (such as the Ops team or Infrastructure team). This role will have the skills to provide Continuous Delivery solutions, including deployment automation, environment provisioning and cloud expertise.

Sprints

Yep, there’s sprints. 2 weeks is the recommended length. Anything longer than that and it’s hardly a sprint, it’s a jog. Whenever I’ve worked in 3 week sprints in the past, I’ve usually seen people take it really easy in the first couple of weeks, because the end of the sprint seemed so far away, and then work their asses off in the final week to hit their commitments. It’s neither efficient nor sustainable.

Backlogs

Another big difference with scrum is that the Product Backlog MUST contain operability features. The backlog is no longer just about product functionality, it’s about every aspect of building, delivering, hosting, maintaining and monitoring your product. So the backlog will contain stories about the infrastructure that the application(s) run on, their availability rates, disaster recovery objectives, deployability and security requirements (to name just a few). These things are no longer assumed, or lie outside of the team – they are considered “first class citizens” so to speak.

I recommend twice-weekly backlog grooming sessions of about an hour, to make sure the backlog is up-to-date and that the stories are in good shape prior to Sprint Planning.

Sprint Planning

Because the backlog is different, sprint planning will be subtly different as well. Obviously we’ve got a broader scope of stories to cover now that we’ve got operational stories in the backlog, but it’s important that everyone understands these “features”, because without them, you won’t be able to deliver your product in the best way possible.

I encourage the whole team to be involved, as per scrum, and treat each story on merit. Ask questions and understand the story before sizing it.

Stories

I recommend INVEST as a guiding principle for stories. Don’t be tempted to put too much detail in a story if it’s not necessary. If you can get the information through conversation with people, and they’re always available, then don’t bother writing that stuff up in detail, it’s just wasting time and effort.

The difference between Scrum and DevOpScrum in respect to stories is that in DevOpScrum we expect to see a large number of stories not written from an end-user’s perspective. Instead, we expect to see stories written from an operation engineers perspective, or an auditor’s perspective, or a security and compliance perspective. This is why I often depart from the As a… I want… So that… template for non “user” stories, and go with a “What:… Why:…” approach, but it doesn’t matter all that much.

Stand-ups

Same as Scrum but if I catch anyone doing that tired old “what I did yesterday, what I’m doing today, blockers…” nonsense I’ll personally come and find you and make a really, really annoying noise.

Please come up with something better, like “here’s what I commit to doing today and if I don’t achieve it I’ll eat this whole family pack of Jelly Babies” or something. Maybe something more sensible than that. Maybe.

Retrospectives

At the end of your sprint, get together and work out what you’ve learned about the way you work, the technology and tools you’ve used, the product you’re working on and the general agile health of your team. Also take a look at how the overall delivery of your product is looking. Most importantly, ask yourself if you’re collaborating effectively, in a way that’s helping to produce a well-rounded product, that’s not only feature-rich but operationally polished as well.

Learn whatever you can and keep a record of what you’ve learnt. If any of these lessons can be turned into stories and put on the backlog as improvements, then go for it. Just make sure you don’t park all of your lessons somewhere and never visit them again!

Deliver Working Software

As with Scrum, in DevOpScrum we aim to deliver something every 2 weeks. But it doesn’t have to just be a shiny front-end to demo to your customers, you could instead deliver your roll-back, patching or Disaster Recovery process and demo that instead. Believe it or not, customers are concerned with that stuff too these days.

Continuous Delivery

I personally believe this should be the guiding practice behind DevOpScrum. If you’re not familiar with Continuous Delivery (CD) then Dave Farley and Jez Humble’s book (entitled Continuous Delivery, for reasons that become very obvious when you read it) is still just about the best material on the subject (apart from my blog, of course).

As with Continuous Integration, CD is more than just a tool, it’s a set of practices and behaviours that encourage good working practices. For example, CD requires high degrees of automation around testing, deployment, and more recently around server provisioning and configuration.

Summary

So there it is in some of its glory, the DevOpScrum framework (ok, it’s just a blog about a framework, there’s enough material here to write an entire book if any reasonable level of detail was required). It’s nothing more than Scrum with a few adjustments to make it more DevOps aligned.

As with Scrum, this framework has the usual challenges – it doesn’t cater for interruptions (such as production incidents) unless you add in a triage function to manage them.

There’s also a whole bunch of stuff I’ve not covered, such as release planning, burn-ups, burn-downs and Minimum Viable Products. I’ve decided to leave these alone as they’re simply the same as you’d find in scrum.

Does this framework actually work? Yes. The truth is that I’ve actually been working in this way for several years, and I know other teams are also adapting their scrum framework in very similar ways, so there’s plenty of evidence to suggest it’s a winner. Is it perfect? No, and I’m hoping that by blogging about it, other people will give it a try, make some adjustments and help it evolve and improve.

The last thing I ever wanted to do was create a DevOps framework, but so many people are asking for a set of guidelines or a suggestion for how they should do DevOps, that I thought I’d actually write down how I’ve been using Scrum and DevOps for some time, in a way that has worked for me. However, I totally appreciate that this worked specifically for me and my teams. I don’t expect it to work perfectly for everyone.

As a DevOps consultant, I spend much of my time explaining how DevOps is a set of principles rather than a set of practices, and the way in which you apply those principles depends very much upon who you are, the ways in which you like to work, your culture and your technologies. A prescriptive framework simply cannot transcend all of these things and still be effective. This is why I always start any DevOps implementation with a blank canvas. However, if you need a kick-start, and want to try DevOpScrum then please go about it with an open mind and be prepared to make adjustments wherever necessary.

![IMG_20150329_114207[1]](https://devopsnet.com/wp-content/uploads/2015/04/img_20150329_1142071.jpg?w=768&h=1024)